I have used other ETL tools before, but not to the same extent as NiFi.

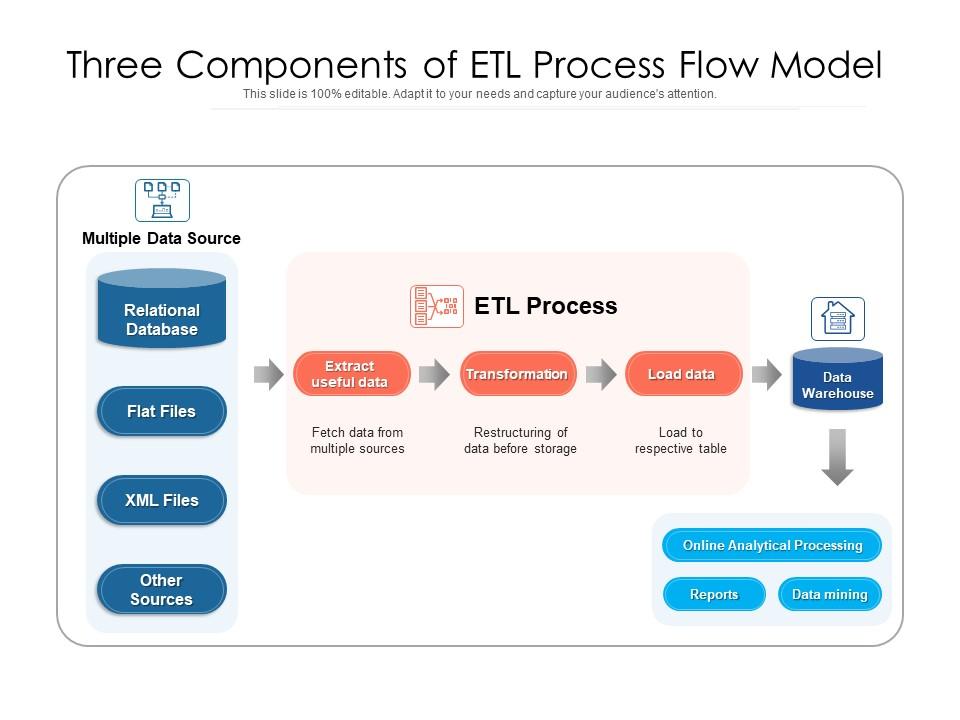

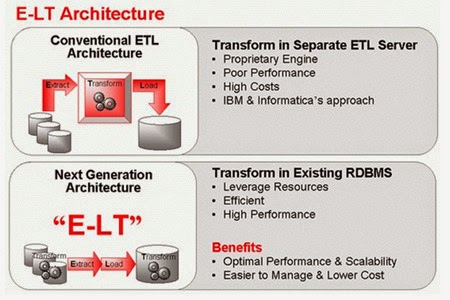

Our existing ETL processes involve extracting from the source system to "flat" files and transferring those files to the database's host by FTP. We present the files to the database as external tables and accomplish the initial transformation and load tasks with multi-table INSERTs from the external tables.

The Role of ETL in the Oracle Communications Data Model: Figure 2-1, "Layers of an Oracle Communications Data Model Warehouse", illustrated the three layers in an Oracle Communications Data Model warehouse environment: the optional staging layer, the foundation layer, and the access layer.

Ability to perform analysis of performance bottlenecks, propose options, and implement performance tuning recommendations for Oracle database queries/code and batch processes.

NiFi is a great tool; you just need to make sure you use it for the right use case. Where needed, you can use other tools to complement it.

Extra strong full disclosure: I am an employee of Cloudera, the company that supports NiFi and other projects such as Spark and Flink.

NiFi is really a tool for moving data around. You can enrich individual records, but it is typically said to do "EtL" with a small t. A typical thing that you would not want to do in NiFi is joining two dynamic data sources:

- For joining tables, tools like Spark, Hive, or classical ETL alternatives are often used.
- For joining streams, tools like Flink and Spark Streaming are often used.

Some of NiFi's strengths:

- Schema aware, and can share schemas with solutions like Kafka, Flink, and Spark.
- It can handle any format. It is not limited to SQL tables and can also move log files, etc.
- Low latency, so you can support both batch and streaming use cases.
- Intuitive GUI, which allows for easy inspection of the data.
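To make the distinction between enriching individual records and joining two dynamic data sources more concrete, here is a minimal Python sketch. It is not NiFi, Flink, or Spark code. The names `COUNTRY_NAMES`, `enrich`, and `join_streams`, along with the `orders` and `payments` sources and their fields, are hypothetical and chosen only for illustration. The point is that enrichment needs nothing beyond the current record and a static lookup, while a join has to hold unmatched records in memory until their partner arrives.

```python
from typing import Dict, Iterable, Iterator, Tuple

# Hypothetical static reference data, e.g. loaded once from a lookup file.
COUNTRY_NAMES: Dict[str, str] = {"NL": "Netherlands", "US": "United States"}


def enrich(record: dict) -> dict:
    """Per-record enrichment: uses only the record and a static lookup."""
    enriched = dict(record)  # copy so the input record is left untouched
    enriched["country_name"] = COUNTRY_NAMES.get(record["country"], "unknown")
    return enriched


def join_streams(events: Iterable[Tuple[str, dict]]) -> Iterator[dict]:
    """Join two interleaved dynamic sources on 'order_id'.

    Each event is (source_name, record), where source_name is either
    "orders" or "payments" (both hypothetical). Records without a partner
    yet are buffered, which is the state a pure data-movement flow
    would rather not manage.
    """
    pending: Dict[str, Dict[int, dict]] = {"orders": {}, "payments": {}}
    for source, record in events:
        other = "payments" if source == "orders" else "orders"
        key = record["order_id"]
        match = pending[other].pop(key, None)
        if match is None:
            # No partner seen yet: keep the record until one shows up.
            pending[source][key] = record
        else:
            # Partner found: emit the combined record.
            yield {**match, **record}
```

Calling `enrich` on a single record returns immediately, whereas `join_streams` may keep records buffered indefinitely if the matching side never arrives. Dedicated engines address that with features such as windowing and state management, which this sketch deliberately leaves out.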
